On October 23 and 24, Brighton welcomed around 5,000 people to discuss the question that has been taking up much of the daily routine of SEO professionals worldwide: how should I reorient my strategy to boost my business’s digital visibility? More than 50 digital marketing professionals traveled to the city, around 70 kilometers from London, to give a series of talks and try to light the way for everyone who works daily on boosting digital visibility in the age of artificial intelligence. The 2025 edition of BrightonSEO was incredible.

Throughout this detailed article, I intend to share the key insights that BrightonSEO, one of the most respected SEO events in the world, brought to professionals who, like me, had the opportunity to hear from leading experts in strategies for creating, optimizing, and measuring content for the digital ecosystem. If you haven’t been living on Mars in recent months, you already know that this strategy now necessarily involves finding ways to ensure adequate visibility in AI-powered search engines (such as ChatGPT, Gemini, Perplexity, Copilot, and many other tools built on large language models, or LLMs).

SEO (Search Engine Optimization). GEO (Generative Engine Optimization). AIO (Artificial Intelligence Optimization). Call it whatever you want; the name is merely a supporting character in this story. Whether in Google’s traditional search model, with its “famous” blue links, or in AI-powered search engines, the foundations of a good content creation and optimization strategy remain valid. At the end of the day, we’re talking about optimizing channels to ensure good visibility in digital search engines.

The big news in all this is the transition we’re going through. We’ve stopped designing journeys focused solely on user experience: machine experience now carries more weight in the equation. In a context where bots crawl digital channels and deliver customized content fragments, ensuring that machines can easily find information on a digital platform has become essential.

Knowing how to optimize your digital content for both contexts is fundamental to achieving visibility in the answers presented by organic search engines. So I want to start this article by highlighting some ideas presented in truly special talks in Brighton.

Strategies to Optimize and Boost Your Brand’s Digital Visibility

Before I break down the talks that most caught my attention regarding strategies to optimize and boost a brand’s digital visibility, a caveat is worth noting: this article offers a limited and subjective perspective on BrightonSEO. I have no doubt that other talks explored similar points.

As expected, I chose the talks I attended based on how applicable their topics were to my work on projects for Iberdrola España, the Iberdrola Group’s subsidiary in Spain, where I work as Web Project Manager for the country’s corporate websites. Without further ado, let’s get to what matters.

Search is everywhere. The evolution of search beyond Google: the importance of implementing an omnichannel digital marketing strategy

In a context where users’ search journey is much more complex and diverse than interaction with a single platform, strategies to optimize and boost a brand’s visibility must include a presence across multiple tools.

In his talk titled “Brand, search, & social — a search everywhere trifecta,” Ashley Liddell, co-founder of Deviation agency, pointed out the multichannel nature of users’ search process. To build an efficient strategy, you need to understand that every digital tool is a channel that can be part of a user’s journey. Users mix Google, ChatGPT, Reddit, TikTok, Instagram, Pinterest, and many other platforms in a nonlinear, often chaotic way.

In this scenario, brands need to understand this multifaceted user behavior and spare no effort to break down the internal barriers that exist in organizations. Strategies need to move toward a more holistic, omnichannel path.

Charlie Clark followed the same line of reasoning in his talk. The CEO of Minty Digital reinforced Ashley’s argument by showing how public relations work matters in the digital ecosystem far beyond links. Content distribution across digital platforms is a key tool for amplifying a brand’s visibility in search engines that present answers generated with AI technologies.

Jack Chambers, marketing and partnerships manager at Candour, and Nick Lafferty, head of Growth Marketing at Profound (one of the main tools on the market for measuring visibility in AI search engines), defended, in their own words, the old mantra of not putting all your eggs in one basket. Concentrating all resources on a single platform is extremely risky. When it comes to content distribution, you need to diversify your content and adopt an omnichannel strategy that matches users’ search process.

Semantic Analysis: vector embeddings, content gap analysis, cosine similarity, and other paths to boost your content’s visibility

One of the topics that most caught my attention at BrightonSEO was the use of semantic analysis as a tool for defining a digital content optimization strategy.

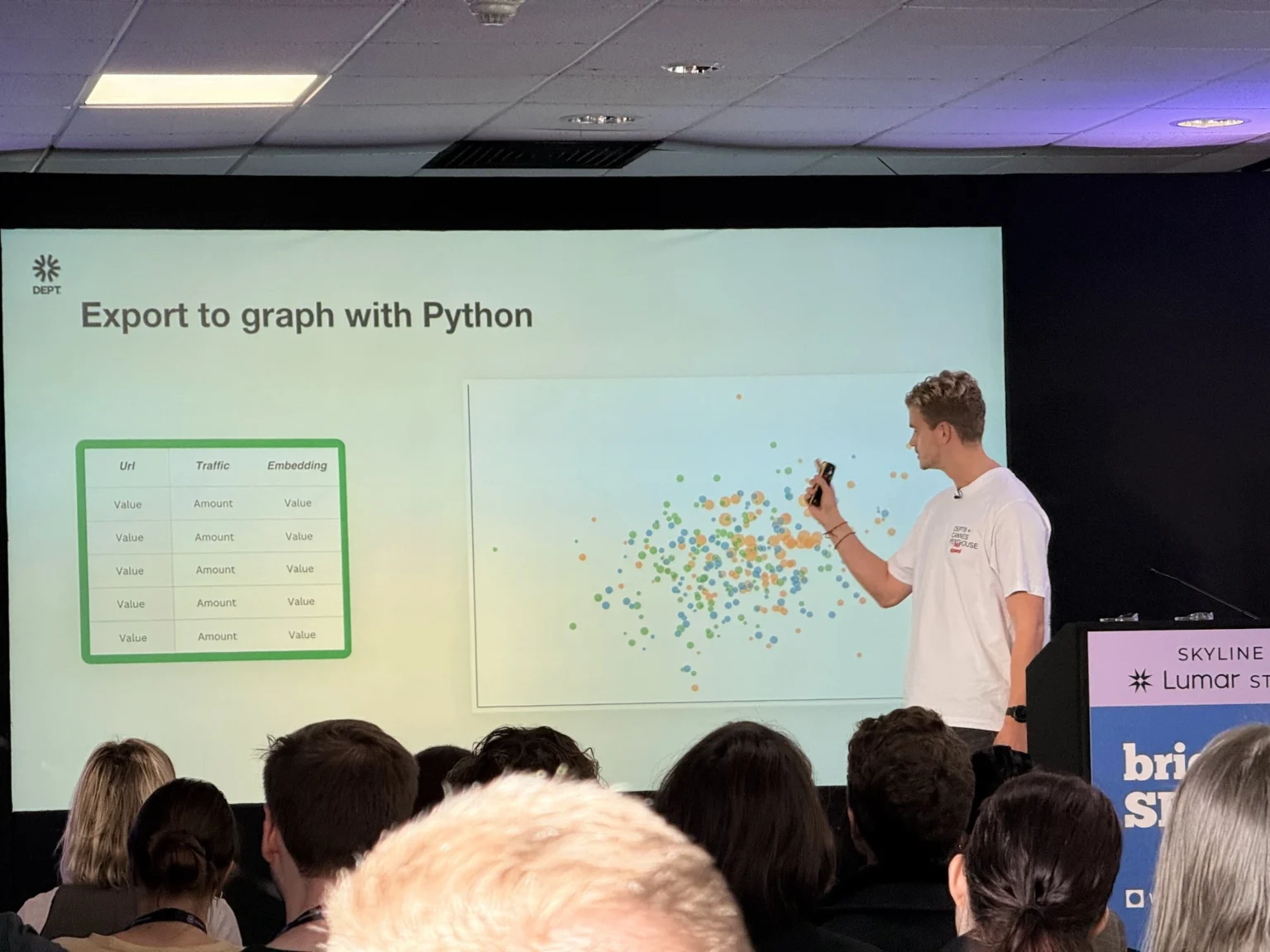

The phenomenal John Iwuozor and Frank van Dijk explored cosine similarity, a measure that compares pieces of content once they have been converted into vectors (embeddings), making it possible to identify other content with very similar semantic value.

In other words, the content is first transformed into numerical vectors. Instead of counting how many words two texts share, the comparison looks at their overall meaning, expressed as the cosine of the angle between the two vectors. For text embeddings this score typically falls between 0 and 1 (strictly, cosine similarity ranges from -1 to 1), and values closer to 1 indicate closer meaning. Based on this score, it’s possible to find other pages with a very close semantic relationship, that is, pages that talk about the same topic and could therefore be optimized.
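To make the comparison concrete, here is a minimal sketch of the cosine similarity calculation itself. It is not the speakers’ tooling: the page paths, the tiny 4-dimensional vectors, and the 0.8 threshold are all hypothetical, and in practice the vectors would come from an embedding model with hundreds of dimensions.

```python
import math
from itertools import combinations


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)


def similar_page_pairs(embeddings: dict[str, list[float]], threshold: float = 0.8):
    """Return (page_a, page_b, score) for every pair at or above the threshold, highest first."""
    pairs = []
    for (url_a, vec_a), (url_b, vec_b) in combinations(embeddings.items(), 2):
        score = cosine_similarity(vec_a, vec_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 3)))
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)


# Hypothetical toy "embeddings"; a real embedding model would produce these vectors.
pages = {
    "/solar-panels-guide": [0.9, 0.1, 0.3, 0.0],
    "/how-solar-panels-work": [0.8, 0.2, 0.35, 0.05],
    "/electric-car-charging": [0.1, 0.9, 0.0, 0.4],
}

for url_a, url_b, score in similar_page_pairs(pages):
    print(f"{url_a} <-> {url_b}: {score}")
```

With these toy vectors only the two solar pages clear the threshold, which is the kind of pair you would then review for overlap.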

It was also in this context that Frank highlighted the need for a mindset change on the part of SEO professionals, emphasizing that “the focus should be on addressing the complete search intent and not just answering specific keywords.” According to him, this model based on solving the user’s complete search intent is more efficient and better aligned with how AI search engines process responses.

Along the same lines as Frank, Lazarina Stoy, an independent SEO consultant, walked through a script-based solution built around KeyBERT, an open-source keyword extraction library, to support this semantic keyword research process. According to her talk, the solution can automatically extract the entities and sentiments linked to a piece of content.
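KeyBERT’s documented approach is to embed a document and its candidate phrases with the same BERT-based model and rank the candidates by cosine similarity to the document; with the library installed, the call looks like `KeyBERT().extract_keywords(doc, keyphrase_ngram_range=(1, 2), stop_words="english", top_n=5)`. The sketch below reproduces only that ranking step with the standard library, swapping the BERT model for a toy bag-of-words vector (an assumption that makes it runnable but far less semantic). It is not Lazarina’s script and does not extract entities or sentiment; the stop-word list and sample text are hypothetical.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list (assumption); real tools ship much larger ones.
STOP_WORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "on", "for", "is", "are", "it", "your", "with"}


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens with stop words removed."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOP_WORDS]


def toy_embed(text: str) -> Counter:
    """Stand-in for a sentence-embedding model: a bag-of-words count vector."""
    return Counter(tokenize(text))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def candidate_phrases(doc: str, max_ngram: int = 2) -> set[str]:
    """All 1..max_ngram word n-grams, built per sentence so phrases never cross a full stop."""
    candidates = set()
    for sentence in re.split(r"[.!?]+", doc):
        tokens = tokenize(sentence)
        for n in range(1, max_ngram + 1):
            for i in range(len(tokens) - n + 1):
                candidates.add(" ".join(tokens[i:i + n]))
    return candidates


def extract_keywords(doc: str, top_n: int = 5, max_ngram: int = 2) -> list[tuple[str, float]]:
    """KeyBERT-style ranking: score each candidate by its similarity to the whole document."""
    doc_vec = toy_embed(doc)
    scored = [(p, cosine(toy_embed(p), doc_vec)) for p in candidate_phrases(doc, max_ngram)]
    # Highest score first; ties broken alphabetically so the output is deterministic.
    scored.sort(key=lambda item: (-item[1], item[0]))
    return [(phrase, round(score, 3)) for phrase, score in scored[:top_n]]


doc = "Solar panels convert sunlight into electricity. Solar panels on your roof cut electricity bills."
print(extract_keywords(doc, top_n=3))
```

Replacing `toy_embed` with a real embedding model is what turns this word-overlap ranking into the semantic ranking the talk described.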

In the same vein as Lazarina, Ulduz Ismayllova (SEO Manager at GeoPostcodes) explained on the BrightonSEO stage how to build a scalable platform that helps you analyze the search intent behind each query. It is designed to solve a problem that traditional SEO runs into: understanding how the intent behind the same keyword can vary depending on the audience.
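The talk summary above doesn’t describe how the platform works internally, so the following is only a minimal rule-based sketch of query-intent labeling, assuming hypothetical modifier lists and a hypothetical per-audience override map to reflect the idea that one keyword can carry different intents for different audiences. A production system would derive these signals from SERP and click data rather than hand-written lists.

```python
import re
from collections import Counter
from typing import Dict, Iterable, Optional, Set

# Hypothetical modifier lists (assumption), checked in this order.
INTENT_MODIFIERS: Dict[str, Set[str]] = {
    "transactional": {"buy", "price", "prices", "cheap", "deal", "order", "quote"},
    "commercial": {"best", "vs", "review", "reviews", "compare", "top", "alternatives"},
    "navigational": {"login", "account", "contact", "app"},
    "informational": {"how", "what", "why", "when", "guide", "meaning"},
}


def classify_intent(query: str, audience_overrides: Optional[Dict[str, str]] = None) -> str:
    """Label a query with an intent; audience-specific rules take precedence over generic ones."""
    tokens = re.findall(r"[a-z0-9]+", query.lower())
    for token in tokens:
        if audience_overrides and token in audience_overrides:
            return audience_overrides[token]
    # Otherwise return the first intent whose modifiers appear in the query.
    for intent, modifiers in INTENT_MODIFIERS.items():
        if modifiers.intersection(tokens):
            return intent
    return "unclassified"


def intent_report(queries: Iterable[str], audience_overrides: Optional[Dict[str, str]] = None) -> Counter:
    """Count intents across a batch of queries, e.g. an exported keyword list."""
    return Counter(classify_intent(q, audience_overrides) for q in queries)


queries = ["buy solar panels", "best solar panels 2025", "how do solar panels work", "solar panels"]
print(intent_report(queries))
# Hypothetical audience rule: for installers, "installation" signals a transactional need.
print(classify_intent("solar panels installation", {"installation": "transactional"}))
```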

Wikipedia, social media and Reddit: the power of collaborative communities

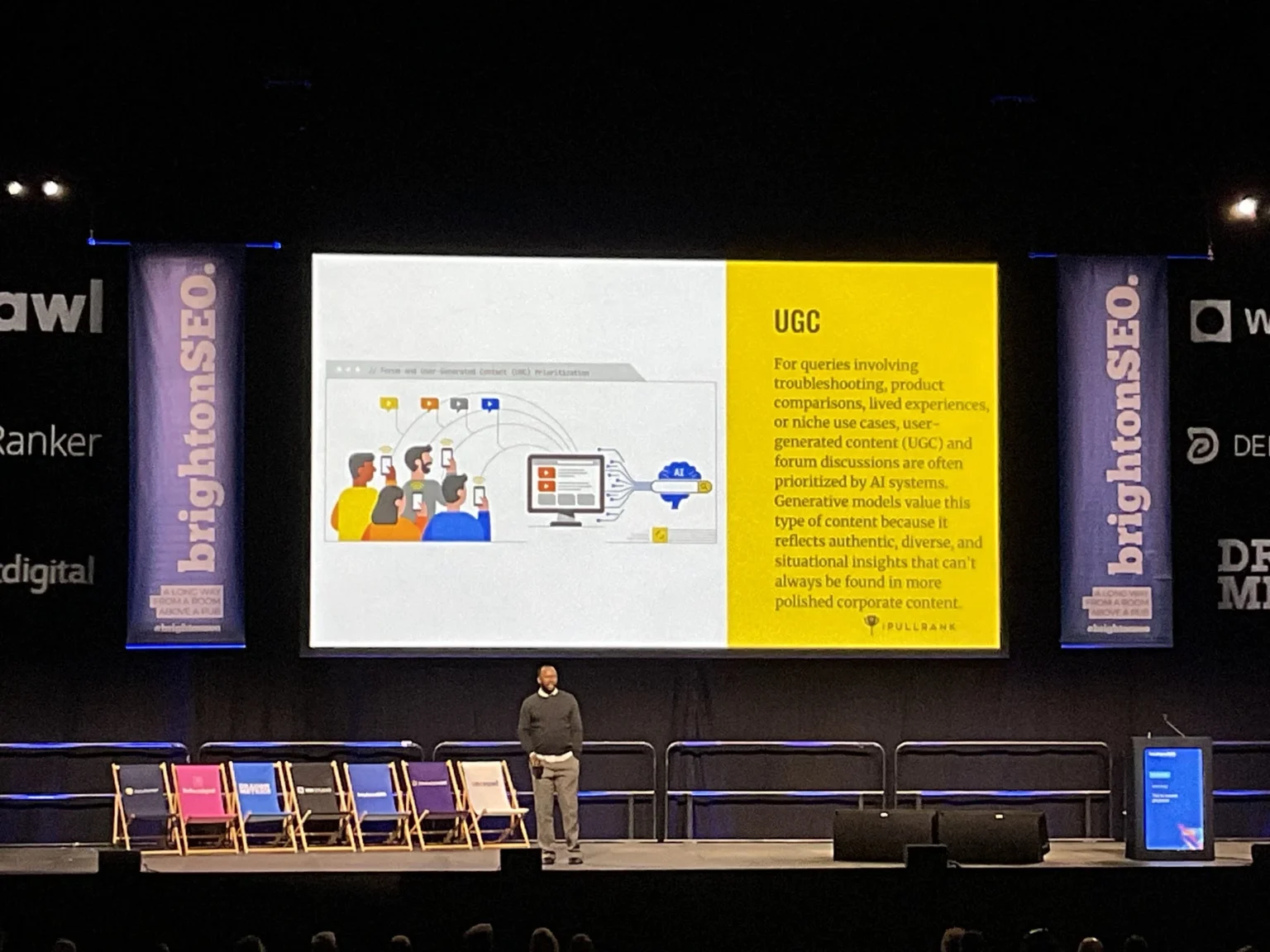

As expected, one of the topics that also gained prominence in the 2025 edition of BrightonSEO was the importance of channels like Wikipedia, social media and Reddit in your brand’s digital visibility strategy.

Nick Lafferty brought not only data but also some possible paths to consider when making strategic decisions for a brand.

In his talk “What analyzing over 250 million AI responses taught us about improving positioning on ChatGPT,” Nick highlighted that:

- Reddit is the most cited source across all AI search engines.

- YouTube, Wikipedia, and other platforms with user-generated content (UGC, such as Reddit and Quora) dominate citations.

- Social media and UGC represent between 12–17% of citations, depending on the AI platform.

This increasingly points to the need for a much more distributed digital communication structure, with no barriers between press, social media, and website initiatives. Searches happen everywhere, on every platform. Therefore, actions to ensure a brand’s digital visibility must cover all the digital assets capable of delivering the right answers to users in the appropriate channels and contexts.

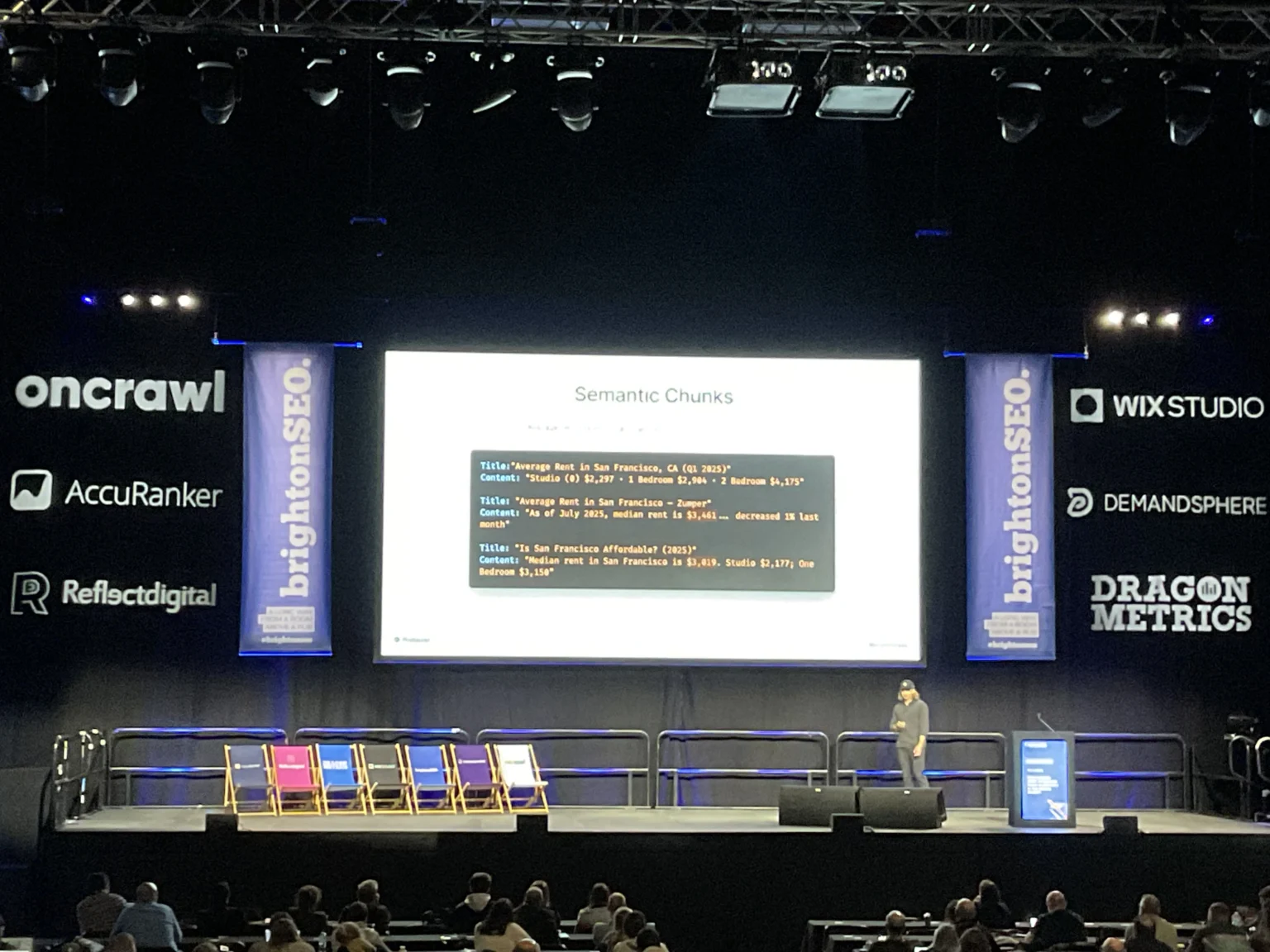

During his talk, the Profound executive also stressed the importance of “semantic chunks” for appearing in LLM responses. According to him, these structures are crucial to achieving visibility in OpenAI’s search engine. They need to deliver direct, specific information in a concise format, explicitly answering the questions users would ask ChatGPT.
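How AI search engines actually split pages into chunks isn’t public, so the following is only an illustration of the idea. This minimal sketch assumes Markdown-style input where `## ` marks a section heading, groups text under its heading, and packs whole sentences into pieces under a hypothetical word budget, repeating the heading on each piece so every chunk can stand on its own.

```python
import re
from typing import List, Tuple


def split_sections(page: str) -> List[Tuple[str, str]]:
    """Group body text under its most recent '## ' heading (Markdown-style input assumed)."""
    sections, heading, lines = [], "Introduction", []
    for line in page.splitlines():
        if line.startswith("## "):
            sections.append((heading, " ".join(lines)))
            heading, lines = line[3:].strip(), []
        elif line.strip():
            lines.append(line.strip())
    sections.append((heading, " ".join(lines)))
    return sections


def pack_sentences(text: str, max_words: int) -> List[str]:
    """Greedily pack whole sentences into pieces of at most max_words words.

    A single sentence longer than the budget is kept whole rather than cut mid-sentence.
    """
    if not text.strip():
        return []
    pieces, current = [], []
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        if current and len(" ".join(current + [sentence]).split()) > max_words:
            pieces.append(" ".join(current))
            current = []
        current.append(sentence)
    pieces.append(" ".join(current))
    return pieces


def semantic_chunks(page: str, max_words: int = 60) -> List[Tuple[str, str]]:
    """Return (heading, text) pairs; repeating the heading keeps each chunk self-explanatory."""
    return [
        (heading, piece)
        for heading, text in split_sections(page)
        for piece in pack_sentences(text, max_words)
    ]


page = (
    "## What is green hydrogen?\n"
    "Green hydrogen is produced with renewable electricity. It emits no CO2 at the point of production.\n"
    "## How is it stored?\n"
    "It can be compressed or liquefied."
)
for heading, text in semantic_chunks(page):
    print(f"[{heading}] {text}")
```

Question-style headings paired with a direct answer in the first sentence mirror the “explicitly answering the questions users would ask” advice above.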

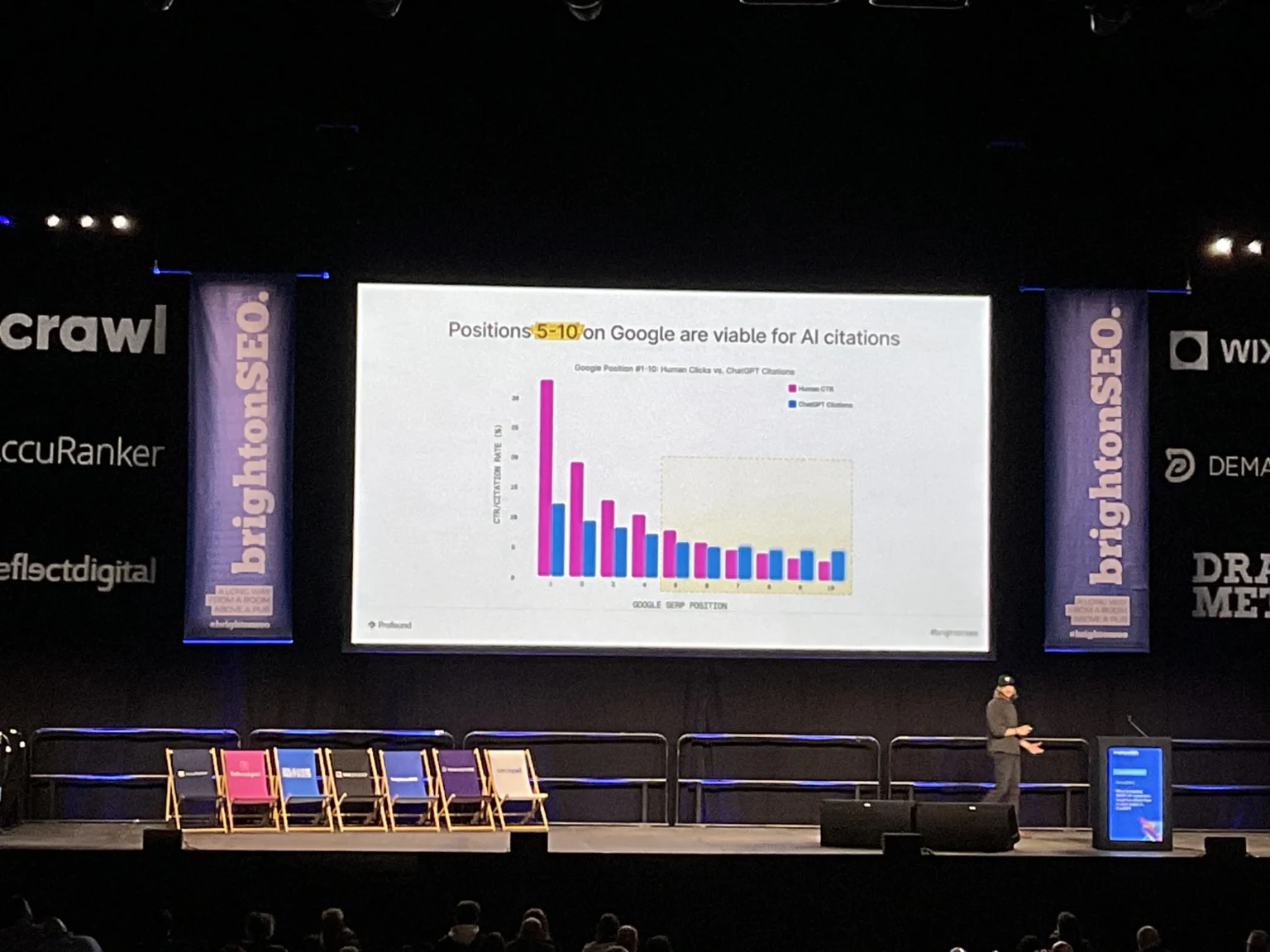

Another interesting finding Nick shared in Brighton was the relationship between the positions content occupies on Google results pages and its appearance on ChatGPT. According to his study, pages ranking between positions 5 and 10 on Google are frequently cited by AI search engines, whereas in traditional SEO the top three results receive most of the clicks.

Content Optimization for Machines: how to make your content accessible to bots in the age of AI

Although good “traditional SEO” serves as the basis for positioning in new search models based on generative responses, you need to understand how to adapt what your brand publishes so that machines can read it too. Put better: in addition to providing a good experience for humans, content published on a brand’s websites must also guarantee a good experience for machines.

Digital Accessibility: ensuring an accessible and inclusive digital experience for humans and machines

Over the two days of talks in Brighton, many SEO and GEO specialists shared initiatives designed to make it easier for bots to find and index content. James Hocking, co-founder of Hocking Digital, highlighted the key role of well-structured, hierarchical content. In this context, it’s essential to:

- Use structured data based on the Schema.org vocabulary (a machine-readable format that helps AI understand content clearly);

- Ensure correct heading hierarchy (H1, H2, etc.);

- And, of course, implement appropriate use of semantic HTML.

Without this proper page structuring, AI bots need to guess the structure and summarize content with limited context.
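One part of that checklist is easy to automate. This minimal sketch uses Python’s built-in `html.parser` to flag skipped heading levels (for example, an H1 followed directly by an H3) and pages without exactly one H1; treating “exactly one H1” as a rule is an assumption reflecting a common convention, not a requirement of the HTML standard.

```python
from html.parser import HTMLParser
from typing import List


class HeadingCollector(HTMLParser):
    """Records the level (1-6) of every <h1>..<h6> start tag, in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: List[int] = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> List[str]:
    """Return human-readable problems with a page's heading hierarchy."""
    parser = HeadingCollector()
    parser.feed(html)
    levels = parser.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {h1_count}")
    for prev, curr in zip(levels, levels[1:]):
        # Going deeper by more than one level (h2 -> h4) skips a level; going back up is fine.
        if curr > prev + 1:
            issues.append(f"heading level jumps from h{prev} to h{curr}")
    return issues


print(heading_issues("<h1>Energy</h1><h3>Solar</h3><h2>Wind</h2>"))
```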

The role of schemas in generative AI responses

- Schema provides a machine-readable format that helps AI understand content clearly.

- A side-by-side comparison of sites with and without schema showed that schema-enhanced sites give AI bots more structured data to work with.

- Although LLMs technically “don’t care about schema,” the orchestrator does care, and schema helps pass the correct message to the LLM.

- Schema essentially provides “clearer annotations” for AI systems to understand your content.
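As an illustration of those “clearer annotations,” here is a minimal sketch that builds a Schema.org `Article` object as JSON-LD, the format commonly embedded in a page’s HTML. The property names (`headline`, `author`, `datePublished`, `publisher`, `mainEntityOfPage`) come from the Schema.org vocabulary; the function name and all sample values are hypothetical, and real markup should be checked with a validator such as the Schema.org validator or Google’s Rich Results Test.

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str,
                   publisher_name: str, url: str) -> str:
    """Return a <script type="application/ld+json"> block describing an Article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date
        "publisher": {"@type": "Organization", "name": publisher_name},
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a value containing "</script>" cannot close the tag early ("\/" is valid JSON).
    body = body.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


print(article_jsonld(
    headline="Key takeaways from BrightonSEO 2025",
    author_name="Jane Doe",            # hypothetical
    date_published="2025-10-30",       # hypothetical
    publisher_name="Example Energy",   # hypothetical
    url="https://www.example.com/brightonseo-2025",
))
```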

Digital Accessibility: a competitive advantage for businesses

Mark Walker (Head of Marketing and Portfolio at Ability Market) also highlighted the need to promote digital accessibility. According to Mark, digital accessibility is not just an ethical issue, but a significant competitive advantage for companies.

In the United Kingdom, the purchasing power of people with disabilities represents £274 billion annually, with 25% of the British population identifying as having some disability. More broadly, companies in Europe should understand that accessibility is particularly important in the context of the European Accessibility Act, which has applied since June 2025 to many digital products and services, including e-commerce, offered to customers in the EU.

Beyond practical SEO issues for making content accessible to people with all types of disabilities (such as using alt text, adding captions to videos, and meeting minimum color-contrast standards), Mark went a step further and showed how accessibility can be a competitive advantage from a business perspective, with emblematic examples from Apple, Aviva (an insurance company in the United Kingdom), and Primark (one of the main fashion retailers in Europe).
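The “minimum contrast standards” mentioned above are defined numerically in WCAG 2.x: a contrast ratio computed from the relative luminance of the text and background colors, with a minimum of 4.5:1 for normal text and 3:1 for large text at level AA. This minimal sketch implements that formula for six-digit hex colors (shorthand like `#fff` is not handled, a simplifying assumption); the colors in the example are arbitrary.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, as in the WCAG relative-luminance definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance (0 = black, 1 = white) of a color like '#1A2B3C'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05), ranging from 1:1 to 21:1."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_wcag_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """WCAG 2.x level AA: 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


for fg in ("#767676", "#777777"):
    print(fg, "on white:", round(contrast_ratio(fg, "#FFFFFF"), 2), passes_wcag_aa(fg, "#FFFFFF"))
```

The two grays differ by a single step per channel, yet one passes and the other fails for body text, which is why checking the ratio beats eyeballing it.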

Visibility before clicks: log analysis as a new measurement tool

Another highlight of the event in southern England that deserves mention was the series of talks on how different companies (and sectors) are using server logs in SEO projects.

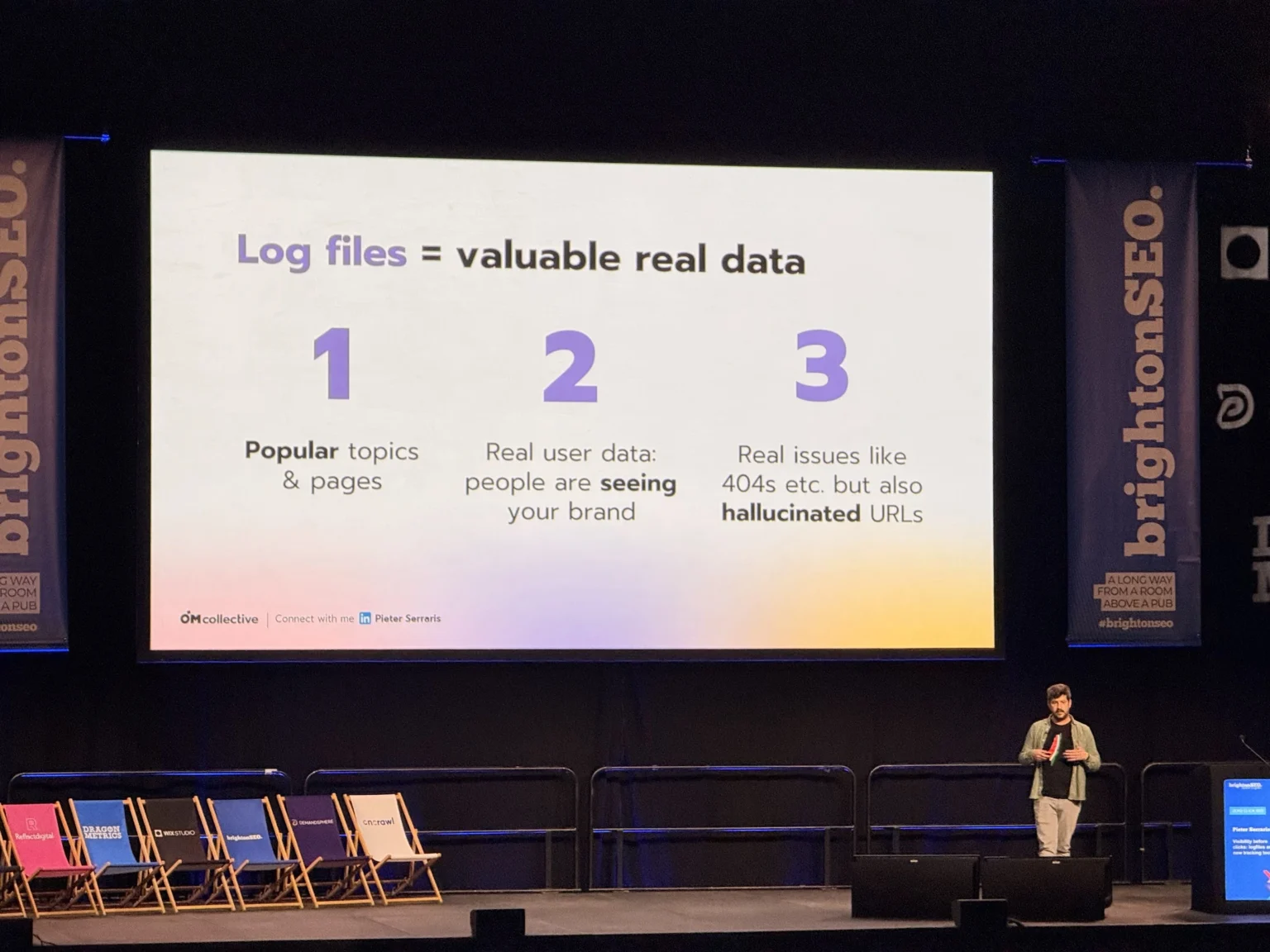

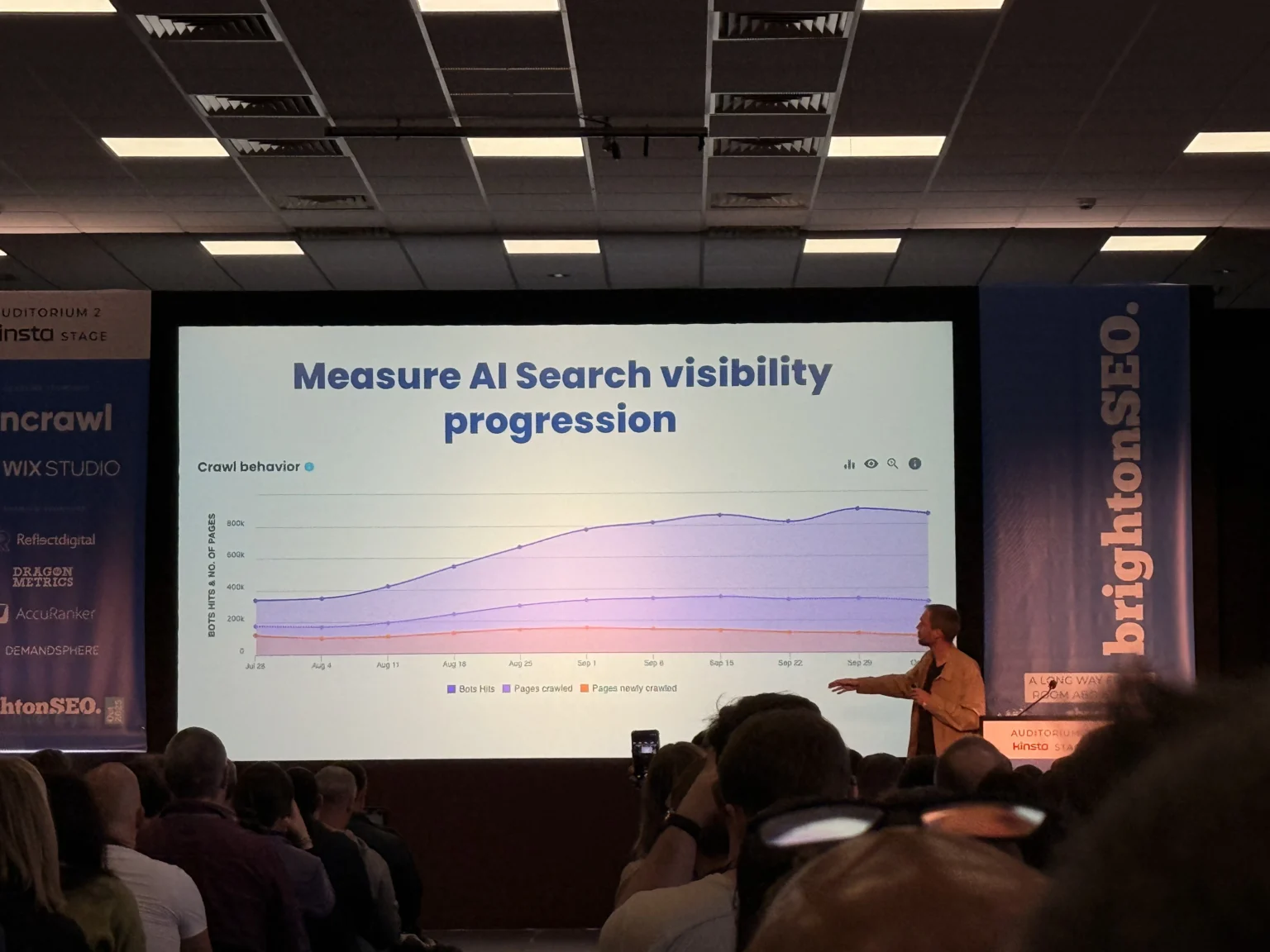

Pieter Serraris, from the Dutch digital agency OMCollective, spoke about how log analysis has been used to understand how AI bots access websites, collect information, process published content, and deliver answers to users’ questions.

Log files are extremely important for understanding content visibility in times of zero-click searches. They act as a “black box” for websites: they record the activity of bots (Googlebot, Pinterestbot, etc.) and users on each web page and, above all, fill a gap that traditional measurement tools don’t cover.

In other words, logs help measure how often ChatGPT and other LLM-based tools fetch a brand’s content, a useful proxy for how often that content can appear in their responses. To show how this resource can be useful in SEO projects, Pieter presented a real project that used logs to identify and manage visits from Pinterest’s bot, which were quite frequent.
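As a starting point for this kind of analysis, here is a minimal sketch that parses access-log lines in the widely used Combined Log Format and counts requests per bot and URL. The user-agent substrings are assumptions to verify against each vendor’s current crawler documentation, and because user agents can be spoofed, serious analyses also verify requests against the vendors’ published IP ranges or via reverse DNS. The sample lines are made up.

```python
import re
from collections import Counter
from typing import Iterable

# Combined Log Format: ip ident user [time] "request" status size "referrer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)

# User-agent substrings to look for (assumption: check vendor docs for current names).
BOT_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot", "Googlebot", "Pinterestbot"]


def count_bot_hits(lines: Iterable[str]) -> Counter:
    """Count requests per (bot token, path); lines that don't match the format are skipped."""
    hits = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match:
            continue
        user_agent = match.group("ua")
        for token in BOT_TOKENS:
            if token in user_agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


sample = [
    '203.0.113.5 - - [23/Oct/2025:10:00:00 +0000] "GET /solar-guide HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [23/Oct/2025:10:05:00 +0000] "GET /solar-guide HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.1 - - [23/Oct/2025:10:06:00 +0000] "GET /contact HTTP/1.1" 200 900 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"',
    "not a log line",
]
for (bot, path), count in count_bot_hits(sample).most_common():
    print(bot, path, count)
```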

Jerome Salomon, who leads SEO strategy at OnCrawl (one of the main technical SEO tools on the market), also outlined ways to use the information available in logs to build metrics and KPIs for your SEO projects. He highlighted several reasons to include log analysis among a project’s measurement tools:

- Log analysis offers advantages over traditional analytical tools: there’s no sampling, data loss, or GDPR (General Data Protection Regulation) issues.

- Log analysis also makes it possible to identify pages that are popular on Google but have never been crawled by AI bots. With this information, you can optimize that content to increase its visibility in AI search engines.

- Unlike traditional measurement tools such as Google Analytics 4, log analysis allows a more precise click-through calculation, because it uses the number of bot visits recorded by the web server as its baseline. This makes it possible to calculate a real conversion rate for your brand’s content used by AI: (Clicks / Bot visits) × 100.
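Both log-based checks above reduce to simple set and ratio operations. This minimal sketch assumes you already have a list of pages that are popular on Google (for example, exported from Search Console) and per-page AI-bot visit counts from your logs; how “clicks” are attributed to AI platforms wasn’t detailed here, so the click figure and all sample data are hypothetical.

```python
from typing import Dict, Iterable, List, Optional


def pages_missing_ai_crawls(google_popular: Iterable[str], ai_bot_visits: Dict[str, int]) -> List[str]:
    """Paths that are popular on Google but have no recorded AI-bot visits."""
    return sorted(path for path in set(google_popular) if ai_bot_visits.get(path, 0) == 0)


def clicks_per_bot_visit(clicks: int, bot_visits: int) -> Optional[float]:
    """(Clicks / Bot visits) x 100; None when no bot visits were recorded."""
    if bot_visits == 0:
        return None
    return clicks * 100 / bot_visits


# Hypothetical data: top Google pages and AI-bot visits counted from server logs.
google_top = ["/solar-guide", "/green-hydrogen", "/tariffs", "/ev-charging"]
ai_visits = {"/solar-guide": 400, "/tariffs": 35}

print("Never crawled by AI bots:", pages_missing_ai_crawls(google_top, ai_visits))
print("Solar guide rate (%):", clicks_per_bot_visit(12, ai_visits["/solar-guide"]))
```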

The future of zero-click content: new paths to boost and monetize your content

You have probably reached this point in the text with a question nagging at you:

“Okay. Log analysis, digital accessibility, cosine similarity and other points that need to be considered in the new SEO context. But, more broadly, how can I prepare for the future of content in a scenario where website click metrics are starting to lose relevance?”

If there’s one clear conclusion about the future of content in an era of zero-click searches, it’s this: there is absolutely no deterministic solution (sorry to tell you this here 😂).

There’s no black and white. We are navigating a very gray area, at a stage where the use of artificial intelligence in search technology has marked an inflection point for brand visibility in search engines.

This paradigm shift represents an evolution from a deterministic model to a probabilistic one, a transformation that has redefined how we interact with search engines. Before, we (humans) discovered information, interpreted it, and consumed it. Now, agents (robots) discover and interpret it, and only then do humans consume it. The future imagined in the movie “Her” (2013), starring Joaquin Phoenix and Scarlett Johansson, seems to be coming true in search, ushering in what many experts are already calling the era of Agent Experience (AX).

In this context, various professionals are testing approaches for LLMs based on what has worked in traditional SEO. I’ll highlight here some paths presented in talks by Jack Chambers, James Yorke (SEO specialist at the insurance company Direct Line Group), and Mike King (one of the most renowned professionals in the SEO industry and founder of the iPullRank agency). They offer an excellent summary of where brands are likely to focus their efforts to achieve visibility in the age of AI.

- Content creators need to diversify beyond Google. In a very chaotic context where users search in a nonlinear way and on multiple platforms, it’s essential to spread your “eggs across different baskets.” Concentrating efforts on a single channel is not strategic. It’s essential to bet on platforms with organic visibility like YouTube, Reddit, and TikTok.

- Transform “How to” content into useful tools for your audience. This way, you deliver unique value that AI-generated answers cannot replace. Brands like Wise, HubSpot, Shopify, Grammarly, Canva, and GoHire have adopted this strategy to gain visibility for strategic keywords. No-code or low-code tools (like Notion, Google Sheets, and Airtable) are great options for building them.

- Build engaged audiences. Committed communities generate genuine brand evangelists who unconditionally support published content.

- Offering premium content is an excellent alternative to monetize digital products. Publishers of all sizes are adopting subscription models and paywalls to deliver exclusive content and additional value to their audience.

- Seeking partnerships with brands that genuinely align with your product’s values is authentic and can be an interesting and profitable path. Modest audiences can attract various sponsors seeking visibility in specific niches.

The next edition of BrightonSEO is scheduled for April 2026, and a lot will happen until then. Truth be told, it seems to me that the October 2025 edition showed that the market keeps moving in a clear direction, even if along a winding path. And, contrary to the sensationalist discourse out there, the announced “death” of SEO is nothing more than fake news. The SEO industry is very much alive. Between mistakes and successes, it keeps putting into practice what has worked in the past to lay the groundwork for what also seems to work for the future of search. But always adapting: this time, to the new agentic context emerging in our SERPs and in our ChatGPT conversations.

And, as always, we carry on!